I Replaced JSON with TOON in My LLM Prompts and Saved 40% on Tokens. Here's How

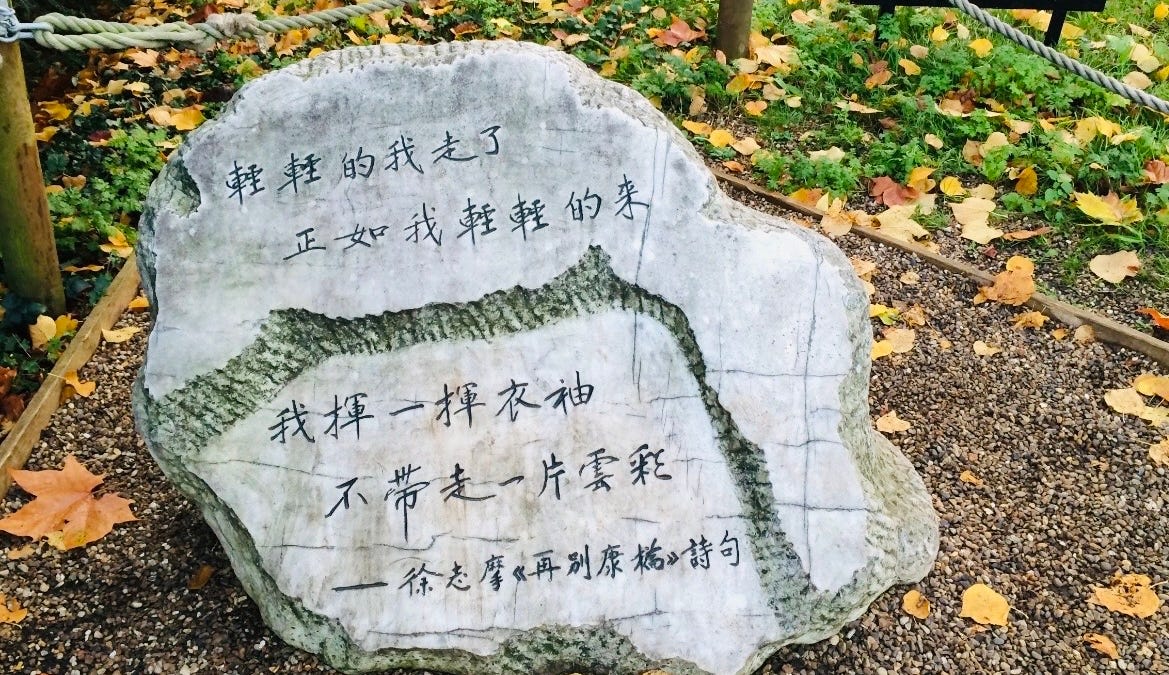

Source: DEV Community

Hey! I'm Andrey, a frontend developer at Cloud.ru, and I write about frontend and AI on my blog and Telegram channel.

I work with LLM APIs every day. And every day I send structured data into context: product lists, logs, users, metrics. All of it is JSON. All of it is money.

At some point I calculated how many tokens in my prompts go to curly braces, quotes, and repeated keys. Turns out, a lot. Way too much.

Then I tried TOON. Here's what happened.

The Problem: JSON Is a Generous Format

Take a typical case. You're building a RAG system or an AI assistant that analyzes data. Your prompt pulls in a list of 50 records. Here's one record in JSON:

{"id": 2001, "timestamp": "2025-11-18T08:14:23Z", "level": "error", "service": "auth-api", "ip": "172.16.4.21", "message": "Auth failed for user", "code": "AUTH_401"}

Now multiply by 50. Each record repeats 7 keys: "id", "timestamp", "level", "service", "ip", "message", "code". Plus quotes around every key and string value. Plus curly braces. Plus